
Sentiment analysis is a Natural Language Processing (NLP) technique for determining the emotional tone of a piece of text. In this post, we will classify movie reviews as positive or negative using two approaches: a task-specific model and an embedding model combined with a classical classifier. We will use the “rotten_tomatoes” dataset from the Hugging Face Hub to demonstrate both techniques.

Text Classification with Representation Models

Dataset Overview

The “rotten_tomatoes” dataset contains a balanced set of 10,662 movie reviews, split into:

- Training set: 8,530 reviews

- Validation set: 1,066 reviews

- Test set: 1,066 reviews

The labels are binary, where 0 represents a negative review, and 1 represents a positive review.
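Once the dataset is loaded in the next step, it is worth confirming that the labels really are balanced. The sketch below counts labels with the standard library only. `label_distribution` is a hypothetical helper name, and the toy list stands in for `dataset["train"]["label"]`.

```python
from collections import Counter


def label_distribution(labels):
    """Return {label: (count, fraction)} for a sequence of integer labels."""
    counts = Counter(labels)
    total = len(labels)
    return {label: (n, n / total) for label, n in sorted(counts.items())}


# Toy stand-in for dataset["train"]["label"] (0 = negative, 1 = positive).
toy_labels = [0, 1, 1, 0, 1, 0]
distribution = label_distribution(toy_labels)
print(distribution)
```

On a balanced split, each label should show a fraction close to 0.5.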

!pip install datasets transformers sentence-transformers scikit-learn tqdm

Loading the Dataset

from datasets import load_dataset

# Load the Rotten Tomatoes dataset
dataset = load_dataset("rotten_tomatoes")
print(dataset)

Method 01: Using a Task-Specific Model

A task-specific model is a representation model fine-tuned on a particular task, such as sentiment analysis. These models are trained on labeled data to classify text directly.

Selecting a Task-Specific Model

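For this approach, we will use distilbert-base-uncased-finetuned-sst-2-english, a DistilBERT model fine-tuned for binary sentiment classification on SST-2 (the Stanford Sentiment Treebank). SST-2 is itself built from movie review sentences, so the model is a close match for this domain.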

Loading the Model

from transformers import pipeline

# Define the model path
model_path = "distilbert-base-uncased-finetuned-sst-2-english"

# Load the model into a pipeline
classifier = pipeline("sentiment-analysis", model=model_path)

Performing Sentiment Classification

from tqdm import tqdm

# Perform inference, mapping the "POSITIVE"/"NEGATIVE" label strings to 1/0
y_pred = []
for review in tqdm(dataset["test"]["text"]):
    output = classifier(review)
    sentiment = 1 if output[0]["label"] == "POSITIVE" else 0
    y_pred.append(sentiment)
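The inference loop calls the pipeline once per review and converts each result inline. A minimal sketch of the same label mapping applied to a whole batch of results is shown here. `outputs_to_labels` is a hypothetical helper, and the sample outputs are made up to mimic the `{"label": ..., "score": ...}` dictionaries a sentiment pipeline returns.

```python
def outputs_to_labels(outputs, positive_label="POSITIVE"):
    """Map pipeline-style dicts to 1 (positive) or 0 (negative)."""
    return [1 if out["label"] == positive_label else 0 for out in outputs]


# Made-up outputs in the shape a sentiment pipeline returns for a list of texts.
sample_outputs = [
    {"label": "POSITIVE", "score": 0.99},
    {"label": "NEGATIVE", "score": 0.97},
    {"label": "POSITIVE", "score": 0.61},
]
sample_pred = outputs_to_labels(sample_outputs)
print(sample_pred)  # [1, 0, 1]
```

With the real pipeline, passing the whole list in one call, for example `classifier(list(dataset["test"]["text"]), batch_size=32, truncation=True)`, should return one dictionary per review that can be fed to this helper. The batch size of 32 is an arbitrary assumption, not a tuned value.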

Method 02: Using an Embedding Model

Instead of using a task-specific model, we can use an embedding model that converts text into numerical vectors (embeddings). These embeddings can then be used for classification with a machine learning model.
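To make the idea concrete, here is a toy sketch of classifying with embeddings. It uses a nearest-centroid rule with cosine similarity rather than the SVM used later in this post, and the 3-dimensional vectors are hand-made stand-ins for real embeddings. All names in it are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


# Hand-made 3-dimensional "embeddings"; real ones have hundreds of dimensions.
train_vectors = {
    0: [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]],  # negative reviews
    1: [[0.1, 0.9, 0.2], [0.0, 0.8, 0.3]],  # positive reviews
}
centroids = {label: centroid(vecs) for label, vecs in train_vectors.items()}


def predict(vector):
    """Return the label whose centroid is most similar to the vector."""
    return max(centroids, key=lambda label: cosine_similarity(vector, centroids[label]))


print(predict([0.2, 0.7, 0.1]))  # 1
print(predict([0.7, 0.3, 0.0]))  # 0
```

The point is only that once text is a vector, any classifier that works on numeric features can be trained on top of it.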

Selecting an Embedding Model

For this approach, we will use sentence-transformers/all-MiniLM-L6-v2, a compact model that maps each text to a 384-dimensional embedding and is fast enough to run comfortably on a CPU.

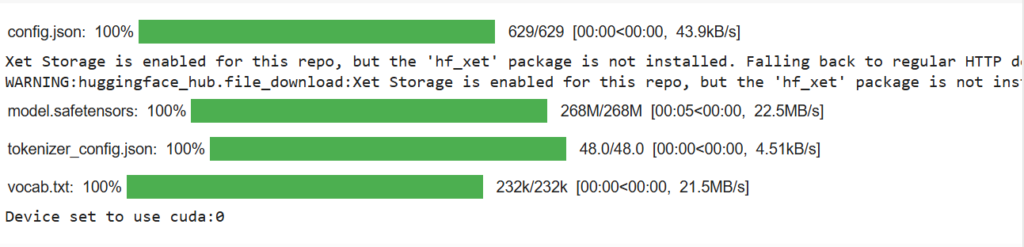

Loading the Embedding Model

from sentence_transformers import SentenceTransformer

# Load the embedding model
embedding_model = SentenceTransformer("sentence-transformers/all-MiniLM-L6-v2")

Generating Embeddings for Movie Reviews

# Convert test reviews into embeddings
test_embeddings = embedding_model.encode(dataset["test"]["text"], convert_to_tensor=True)

Training a Classifier Using Embeddings

We can now use these embeddings as features to train a classifier, such as a Support Vector Machine (SVM).

from sklearn.svm import SVC
from sklearn.metrics import classification_report

# Embed the training reviews and collect their labels
X_train = embedding_model.encode(dataset["train"]["text"], convert_to_tensor=True)
y_train = dataset["train"]["label"]

# Train a linear SVM; a separate name avoids overwriting the pipeline `classifier` from Method 01
svm_classifier = SVC(kernel='linear')
svm_classifier.fit(X_train.cpu().numpy(), y_train)

# Predict sentiment on test data
y_pred_embeddings = svm_classifier.predict(test_embeddings.cpu().numpy())

Evaluating the Models

We can measure the performance of both models using precision, recall, accuracy, and F1-score.

# Define evaluation function
def evaluate_model(y_true, y_pred):
    report = classification_report(y_true, y_pred, target_names=["Negative", "Positive"])
    print(report)

# Evaluate the task-specific model
evaluate_model(dataset["test"]["label"], y_pred)

# Evaluate the embedding-based model
evaluate_model(dataset["test"]["label"], y_pred_embeddings)

Output:

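Task-specific model:

| | precision | recall | f1-score | support |
|---|---|---|---|---|
| Negative | 0.89 | 0.90 | 0.90 | 533 |
| Positive | 0.90 | 0.89 | 0.90 | 533 |
| accuracy | | | 0.90 | 1066 |
| macro avg | 0.90 | 0.90 | 0.90 | 1066 |
| weighted avg | 0.90 | 0.90 | 0.90 | 1066 |

Embedding-based model:

| | precision | recall | f1-score | support |
|---|---|---|---|---|
| Negative | 0.78 | 0.78 | 0.78 | 533 |
| Positive | 0.78 | 0.78 | 0.78 | 533 |
| accuracy | | | 0.78 | 1066 |
| macro avg | 0.78 | 0.78 | 0.78 | 1066 |
| weighted avg | 0.78 | 0.78 | 0.78 | 1066 |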
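To see what the numbers in such a report mean, the sketch below computes accuracy, precision, recall, and F1 by hand for one class. It is a simplified illustration, not scikit-learn's implementation. `binary_metrics` is a hypothetical helper and the labels are toy values, not results from this experiment.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for one class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)

    # Guard against empty denominators when a class is never predicted or present.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": correct / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Toy labels, not taken from the experiment above.
toy_true = [1, 1, 1, 0, 0, 0, 1, 0]
toy_pred = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = binary_metrics(toy_true, toy_pred)
print(metrics)
```

Calling the helper with `positive=0` gives the row for the negative class, which is how a per-class report is assembled.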

Understanding the Results

- The task-specific model is optimized for sentiment analysis and performs well out of the box: it reached 0.90 accuracy on the test set without any training on our side.

- The embedding model with an SVM on top reached 0.78 accuracy. Its advantage is flexibility: the same text embeddings can be reused for tasks beyond sentiment analysis.

- If higher accuracy is needed, fine-tuning either model on domain-specific data (e.g., movie reviews) can further improve performance.

Both approaches have their advantages. Task-specific models are easier to use, while embedding models offer more flexibility for multi-task applications. Depending on your use case, either approach can be an excellent choice for sentiment classification.

What’s next? Future improvements could include fine-tuning models on a larger movie review dataset or exploring more efficient transformer architectures.

Sentiment analysis plays a crucial role in applications such as customer feedback analysis, social media monitoring, and market research. By leveraging these NLP techniques, we can gain valuable insights from textual data!